2026 Video Productivity Revolution: How GPT-Image-2 × Seedance 2.0 Makes the "One-Person Studio" a Reality

I. Beyond Realism: Why "Cross-Model Controllability" is the Tech Dividend of 2026

If you are still searching for the best GPT-Image-2 online free tool just to marvel at AI clarity, you are missing the true revolution. In 2026, the focus has shifted from "resolution" to absolute controllability.

Recently on X, top-tier creators have demonstrated a formidable GPT-Image-2 x Seedance 2.0 workflow. By combining GPT-Image-2 (defining the high-fidelity visual) with Seedance 2.0 (defining the physics of motion), you can now complete a AAA-grade game trailer or a high-converting e-commerce ad on a single canvas.

After stress-testing this AI image-to-video workflow with hundreds of clips, I’ve synthesized a Standard Operating Procedure (SOP) that bridges the gap between amateur creators and professional directors.

II. Deep Dive: 5 God-Tier Use Cases (Real-World Benchmarks)

In 2026, if your generated videos still look like "melting jelly," it’s because you haven't mastered the synergy between GPT-Image-2’s texture definition and Seedance 2.0’s physical laws. Here are five elite scenarios from my personal practice:

1. AAA Game Demos

Tactical Tip: Use GPT-Image-2 to generate high-fidelity game assets and action storyboards, then use Seedance 2.0 for scene rendering. The integration is seamless.

The Workflow: Because the storyboard spells out each action in order, this "instructional" generation method lets Seedance 2.0 follow the intended sequence of actions. For developers weighing GPT-Image-2 API pricing, this integrated pipeline can significantly reduce the cost of cinematic pre-visualization.

2. Anime-Style Production

Tactical Tip: Utilize GPT-Image-2 to generate component illustrations and cell grid layouts as "action blueprints." This helps the model clearly understand the chronological order of the scene.

Prompt Share: [Subject]: A young woman baking croissants. [Grid Layout]: 3x3. [Theme]: The process from raw dough to the final golden-brown flaky crust. [Atmosphere]: Warm, nostalgic, cozy. [Style]: 80s anime style, soft pastel palette, hand-drawn texture, soft lighting, slight film grain.
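
The bracketed fields in the prompt above can be assembled in code so that every storyboard prompt keeps the same field order. Here is a minimal sketch in Python. The `build_prompt` helper is hypothetical, written only for illustration, and is not part of any GPT-Image-2 API; it simply produces the text you would paste into the tool.

```python
def build_prompt(fields: dict[str, str]) -> str:
    """Join labelled fields into one '[Label]: value.' prompt string.

    Hypothetical helper: the bracket format mirrors the prompt shared above.
    """
    parts = []
    for label, value in fields.items():
        text = value.strip().rstrip(".")
        if text:  # skip fields that were left empty
            parts.append(f"[{label}]: {text}.")
    return " ".join(parts)


croissant_prompt = build_prompt({
    "Subject": "A young woman baking croissants",
    "Grid Layout": "3x3",
    "Theme": "The process from raw dough to the final golden-brown flaky crust",
    "Atmosphere": "Warm, nostalgic, cozy",
    "Style": "80s anime style, soft pastel palette, hand-drawn texture, "
             "soft lighting, slight film grain",
})
print(croissant_prompt)
```

Keeping the fields in a dictionary makes it easy to swap the subject or the grid size while leaving the style block untouched.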


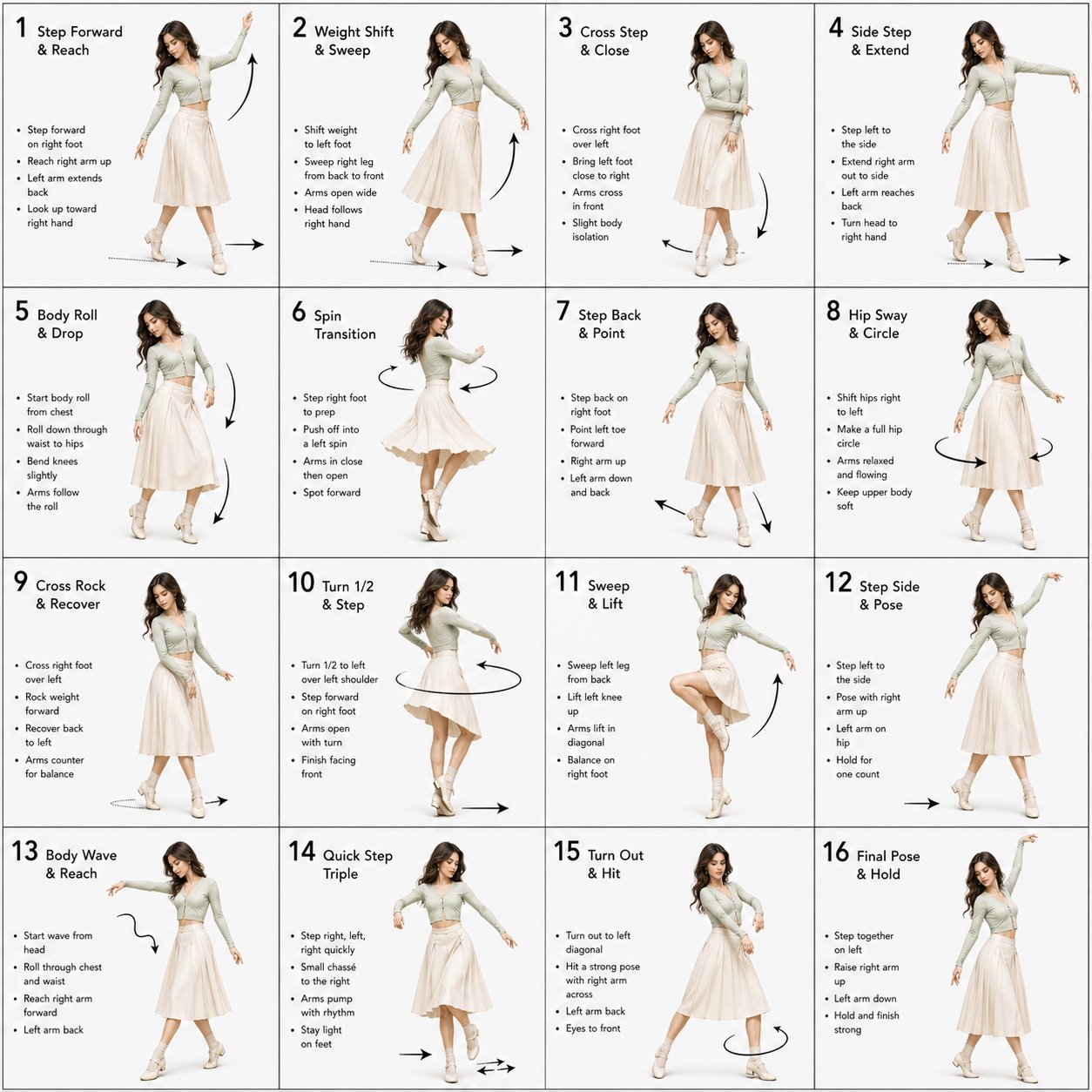

3. Action Map Reference: Complex Dance & Motion

The Workflow: Upload an Action Map as a reference. GPT-Image-2 generates a 3D-textured master frame, which Seedance 2.0 injects with "dance dynamics."

Prompt Share: [Instruction]: Dance sequence guide. [Visual Style]: 3D rendered female dancer. Professional choreography guide layout, technical and diagrammatic. Clean white background, studio lighting, high contrast. [Grid]: 4x4 structured grid (16 frames) separated by thin black lines, numbered 1-16. [Character]: Consistent female dancer based on image1. [Clothing]: Form-fitting top with a flowing skirt. Fabric should subtly react to movement with realistic folds and flow.
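
To check or crop individual frames of a numbered grid like the one requested above, it helps to know where each cell sits. The sketch below computes the pixel box of every cell, assuming the frames are numbered left to right, top to bottom, and that the sheet is 1024x1024 pixels. Both are assumptions made for illustration, and the thin separator lines are ignored.

```python
def grid_cells(width: int, height: int, rows: int, cols: int):
    """Return (number, left, top, right, bottom) for each grid cell.

    Cells are numbered from 1 in reading order (an assumption about
    how the generated sheet is laid out).
    """
    cells = []
    for r in range(rows):
        for c in range(cols):
            number = r * cols + c + 1
            left = c * width // cols
            right = (c + 1) * width // cols
            top = r * height // rows
            bottom = (r + 1) * height // rows
            cells.append((number, left, top, right, bottom))
    return cells


cells = grid_cells(1024, 1024, rows=4, cols=4)
print(len(cells))   # 16
print(cells[0])     # (1, 0, 0, 256, 256)
print(cells[-1])    # (16, 768, 768, 1024, 1024)
```

The boxes can be passed to any image editor or cropping tool to pull out a single pose for closer inspection before animating.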

Performance: Even in high-amplitude dance moves, the hands and ankles remain anatomically intact. This is the gold standard for consistent AI character video.

4. E-Commerce: Killing the Professional Studio in 5 Minutes

The Scenario: Creating a commercial for "Artisan Kimchi."

The Workflow: Generate 4 distinct flyer concepts as video starting points within GPT-Image-2 on the same canvas.

Value: Static images go in; dynamic ads come out. Seedance 2.0's physics simulation makes fabric drape naturally and food textures look incredibly appetizing, killing the need for an expensive physical studio.

5. Nostalgic Vibe: Recording a "Kenting Trip"

Prompt Share: A collage of 12 photos of a young Taiwanese couple traveling in Kenting. The photos feel casual, as if taken with a mobile phone, with a nostalgic atmosphere.

Dynamic Upgrade: Seedance 2.0 successfully recreates the subtle "handheld" jitter of a smartphone, turning a static collage into an emotionally resonant travel vlog.

III. Pro Tips: Secrets for GPT-Image-2 Prompt Engineering

After refining my GPT-Image-2 prompt engineering guide, I’ve identified the three "must-haves" for a masterpiece:

Lighting Anchor: Always include "stable studio lighting" in your prompt. This provides a visual anchor that prevents the erratic "color flicker" during video generation.

Hand-Fix Strategy: Minor flaws in the master frame are magnified tenfold in video. Use the In-painting feature on GPT Image Tools to fix hands before you hit the "animate" button.

The Golden Rule of Text: GPT-Image-2 is famous for AI text rendering. Generate your brand logo in the static image first; Seedance 2.0 will then keep that text stable and legible throughout the motion.
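
The Lighting Anchor tip above is easy to forget when prompts are written by hand, so it can be enforced with a small check. This is a minimal sketch; `with_lighting_anchor` is a hypothetical helper that edits only the prompt text and does not call any image or video API.

```python
LIGHTING_ANCHOR = "stable studio lighting"


def with_lighting_anchor(prompt: str, anchor: str = LIGHTING_ANCHOR) -> str:
    """Append the lighting anchor unless the prompt already contains it."""
    if anchor.lower() in prompt.lower():
        return prompt  # already anchored, leave the prompt untouched
    base = prompt.strip().rstrip(".,; ")
    return f"{base}, {anchor}." if base else f"{anchor}."


print(with_lighting_anchor("3D rendered female dancer, clean white background."))
# 3D rendered female dancer, clean white background, stable studio lighting.
```

The check is case-insensitive, so a prompt that already says "Stable Studio Lighting" is returned unchanged.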

IV. The Exclusive "3-2-1" AI Video Production SOP

If you want to achieve professional results, follow this exclusive workflow:

3 Layers of Prompting: Visual Layer (Textures), Physics Layer (Gravity/Wind), and Emotion Layer (Facial Sync).

2 Iteration Renders: First, generate a 360p draft to confirm motion logic. Then, use the GPT-Image-2 upscale feature for a 4K final render.

1 Localized Loop: Integrate OmniVoice for authentic local accents. The lip-sync precision in 2026 is so advanced that every frame aligns perfectly with the audio waveform.
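
The first two parts of the "3-2-1" SOP can be captured as plain data so each project follows the same checklist. The sketch below is illustrative only: the class names, the bracket labels, and the example layer text are assumptions, "4K" is taken to mean a 2160-line UHD frame, and the voice step is left out because it depends on the audio tool you use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayeredPrompt:
    visual: str   # Visual Layer: textures
    physics: str  # Physics Layer: gravity / wind
    emotion: str  # Emotion Layer: facial performance

    def render(self) -> str:
        """Combine the three layers into a single prompt string."""
        return " ".join([
            f"[Visual]: {self.visual}.",
            f"[Physics]: {self.physics}.",
            f"[Emotion]: {self.emotion}.",
        ])


@dataclass(frozen=True)
class RenderPass:
    name: str
    height_px: int


def render_plan() -> list[RenderPass]:
    """Two passes: a cheap 360p draft, then the 4K (2160p) final."""
    return [RenderPass("draft", 360), RenderPass("final", 2160)]


prompt = LayeredPrompt(
    visual="golden-brown flaky crust, hand-drawn texture",
    physics="steam rising slowly, flour settling under gravity",
    emotion="gentle, contented smile",
)
print(prompt.render())
for render_pass in render_plan():
    print(render_pass.name, render_pass.height_px)
```

Writing the layers as separate fields makes it obvious when one of them, most often the physics layer, has been left empty.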

V. Conclusion: The Barrier to Creation is Gone

Image generation and video generation used to be two separate tracks. The combination of GPT-Image-2 + Seedance 2.0 has merged them into one. This is no longer a distant tech demo; it is a production tool you can use today.

Go ahead—your next viral hit might just start with a single image.

Direct Access:

GPT-Image-2 Online Tools: https://gptimage.tools/